Apple envisions a version of Siri that can handle complex requests like “Find the photo from Saturday, crop the background, and send it to Mom” in a single command, without follow-up questions or manual switching between apps. This capability lies at the heart of the comprehensive Siri overhaul scheduled to be unveiled at the Worldwide Developers Conference (WWDC) on June 8 as part of iOS 27. The ability to chain multiple commands in one sentence is the headline feature of this update; the problem is that it does not yet work reliably.

The Three-Link Execution Chain

At WWDC 2024, Apple defined the Siri upgrade in three parts: deeper linguistic understanding, contextual awareness linked to personal data, and an expanded ability to take actions within and across apps. The first two layers let Siri “understand”; the third, acting on that understanding, is where the upgrade succeeds or fails.

Natural language understanding is Siri’s ability to parse instructions even when the user stumbles over their words, a real shift from the old keyword-based system. Context awareness requires Siri to know what is on the screen and to access personal content such as photos and messages, so that it can work out what a phrase like “the photo from Saturday” refers to.

Execution, however, is the weakest link: Siri must invoke structured actions, in the correct order, across apps it does not always control. This is less a problem of language models than of the reliability of the systems themselves; beta versions of iOS 26.5 revealed that Siri still misinterprets requests or crashes when performing complex tasks.

The Challenge of Third-Party Apps and the App Intents Framework

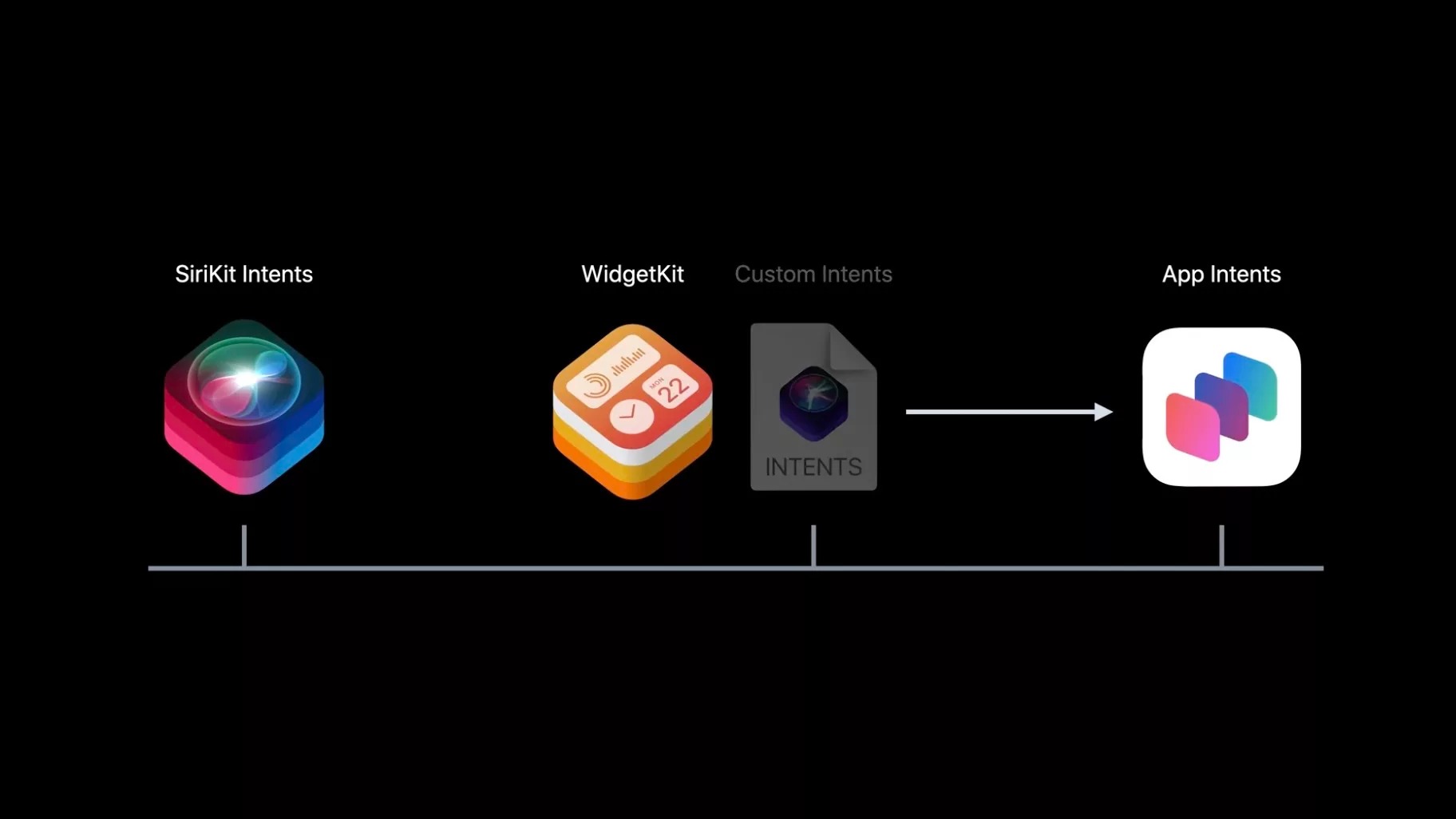

For every step in a sequential command, Siri needs a structured path within the relevant app. Apple has built this through the “App Intents” framework, which covers hundreds of actions. Within Apple apps like Photos, Messages, and Mail, integration is tight because Apple controls the system from end to end.

For context, App Intents is the framework that acts as a “translator” or “bridge” between system intelligence, such as Siri or the Shortcuts app, and the functions inside an app.

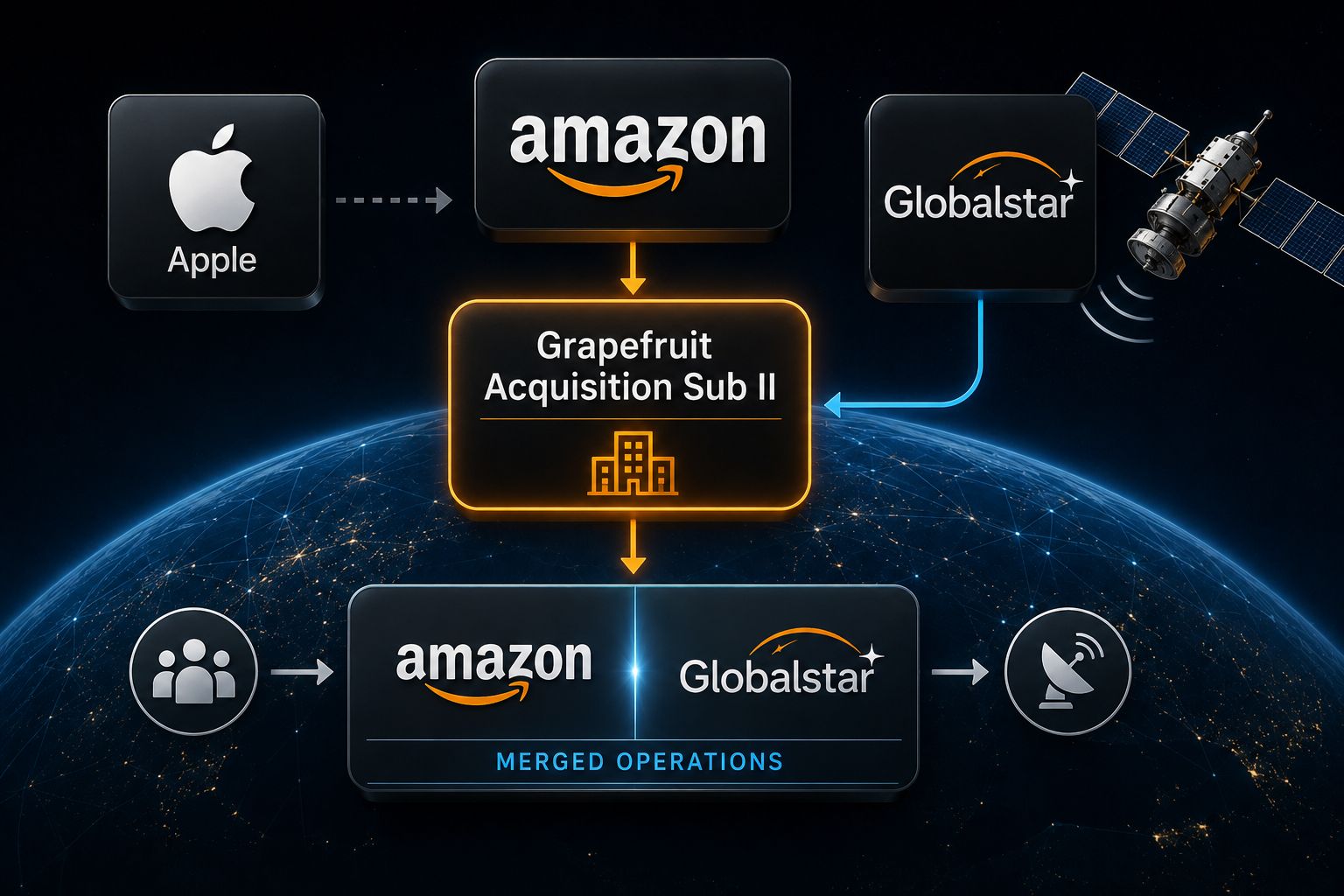

For third-party apps, however, the capability depends entirely on developers adopting this framework. The gap is clear: a request like “Find the photo, crop it, and send it” might work across Apple apps, but one involving an external app such as Dropbox could stumble if the developer has not implemented the necessary intents. Such failures do not always produce a clear error; execution may simply stop halfway, leaving the user confused.
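To make that failure mode concrete, here is a minimal Python sketch of a sequential action chain. It is a toy model under stated assumptions: `AppRegistry`, `run_chain`, and the action names are all hypothetical and are not Apple's App Intents API (which is a Swift framework). Each app either registers an action or it does not, each step's output feeds the next, and the chain stops at the first missing action. Unlike a silent halfway stop, this model reports which step could not run.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional

# Hypothetical model only: each app exposes named actions, loosely the way an
# app might expose App Intents. None of these names are Apple APIs.
Action = Callable[[Any], Any]


@dataclass
class AppRegistry:
    actions: dict[tuple[str, str], Action] = field(default_factory=dict)

    def register(self, app: str, name: str, fn: Action) -> None:
        self.actions[(app, name)] = fn

    def lookup(self, app: str, name: str) -> Optional[Action]:
        return self.actions.get((app, name))


@dataclass
class ChainResult:
    completed: list[str]        # steps that ran, in order
    failed_step: Optional[str]  # first step that could not run, if any
    output: Any                 # value produced by the last completed step


def run_chain(registry: AppRegistry, steps: list[tuple[str, str]], initial: Any) -> ChainResult:
    """Run steps in order, feeding each step's output into the next.

    Stops at the first step whose app has not registered the action and
    reports that step explicitly instead of failing silently.
    """
    completed: list[str] = []
    value = initial
    for app, name in steps:
        label = f"{app}.{name}"
        fn = registry.lookup(app, name)
        if fn is None:
            return ChainResult(completed, label, value)
        value = fn(value)
        completed.append(label)
    return ChainResult(completed, None, value)


# Usage: every step is registered, so the whole chain runs.
registry = AppRegistry()
registry.register("Photos", "find", lambda query: f"photo({query})")
registry.register("Photos", "crop", lambda photo: f"cropped({photo})")
registry.register("Messages", "send", lambda item: f"sent({item}) to Mom")

ok = run_chain(registry, [("Photos", "find"), ("Photos", "crop"), ("Messages", "send")], "Saturday")
print(ok.failed_step, ok.output)
# None sent(cropped(photo(Saturday))) to Mom

# In this model, "Dropbox" never registered an "upload" action.
partial = run_chain(registry, [("Photos", "find"), ("Photos", "crop"), ("Dropbox", "upload")], "Saturday")
print(partial.completed, partial.failed_step)
# ['Photos.find', 'Photos.crop'] Dropbox.upload
```

Returning the failed step, rather than just stopping, is the kind of explicit signal a user would need to understand why a multi-app request only partly completed.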

Launch Delays Indicate the Difficulty of the Task

Apple has officially delayed the “more personalized” version of Siri several times; it had been targeted for earlier updates such as iOS 26.4, which shipped without the new Siri features. This pattern of delays points to a structural difficulty: making Siri understand a complex sentence in a test environment is one thing, but making it execute three consecutive tasks accurately on millions of devices, with unexpected inputs, is something else entirely.
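A rough way to see why chaining is harder than a single task: assume, purely for illustration, that each step succeeds independently with the same probability $p$. The whole three-step chain then succeeds only with probability $p^3$. The 95% figure below is hypothetical, not a measured Siri success rate.

$$P(\text{chain succeeds}) = p^3, \qquad p = 0.95 \;\Rightarrow\; 0.95^3 \approx 0.857$$

In other words, steps that each work 19 times out of 20 yield a three-step chain that fails roughly one time in seven.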

Reports indicate that the rollout may be spread across iOS 26.5 and iOS 27, suggesting that the execution layer is not yet consistent enough to launch as a single, integrated feature. Apple appears to be releasing what it trusts now while continuing to strengthen the parts that still struggle.

Upcoming Developers Conference

Apple will certainly dazzle us with a Siri demo on June 8, and everything will look perfect, because the presentation is scripted and tested in advance. But the demo is not what matters; the real test is what Apple says about real-world behavior: which apps Siri will actually support, and which tasks it can perform accurately from day one. That is the difference between a mere “promotional demo” and a feature we actually use in our daily lives.

If Siri can turn ordinary speech into multi-step actions inside apps without us touching the screen, it will be a major change in how we use the iPhone. The real question now is not whether Apple wants to achieve this, but whether the system is finally robust enough to execute these complex tasks and fulfill the promises the company has made over the past two years.

