We all have personal projects we started with great enthusiasm, only to leave them gathering dust in a folder once life got in the way. The Japanese have a wonderful word, “Tsundoku,” for the pile of books you buy intending to read one day, which keeps growing without any of them ever being opened. As programmers and tech enthusiasts, we have our own “Tsundoku” of unfinished code. These abandoned projects are perfect candidates for testing AI-powered coding assistants; after all, without that help we would probably never have finished them anyway.

The Idea and Preparation

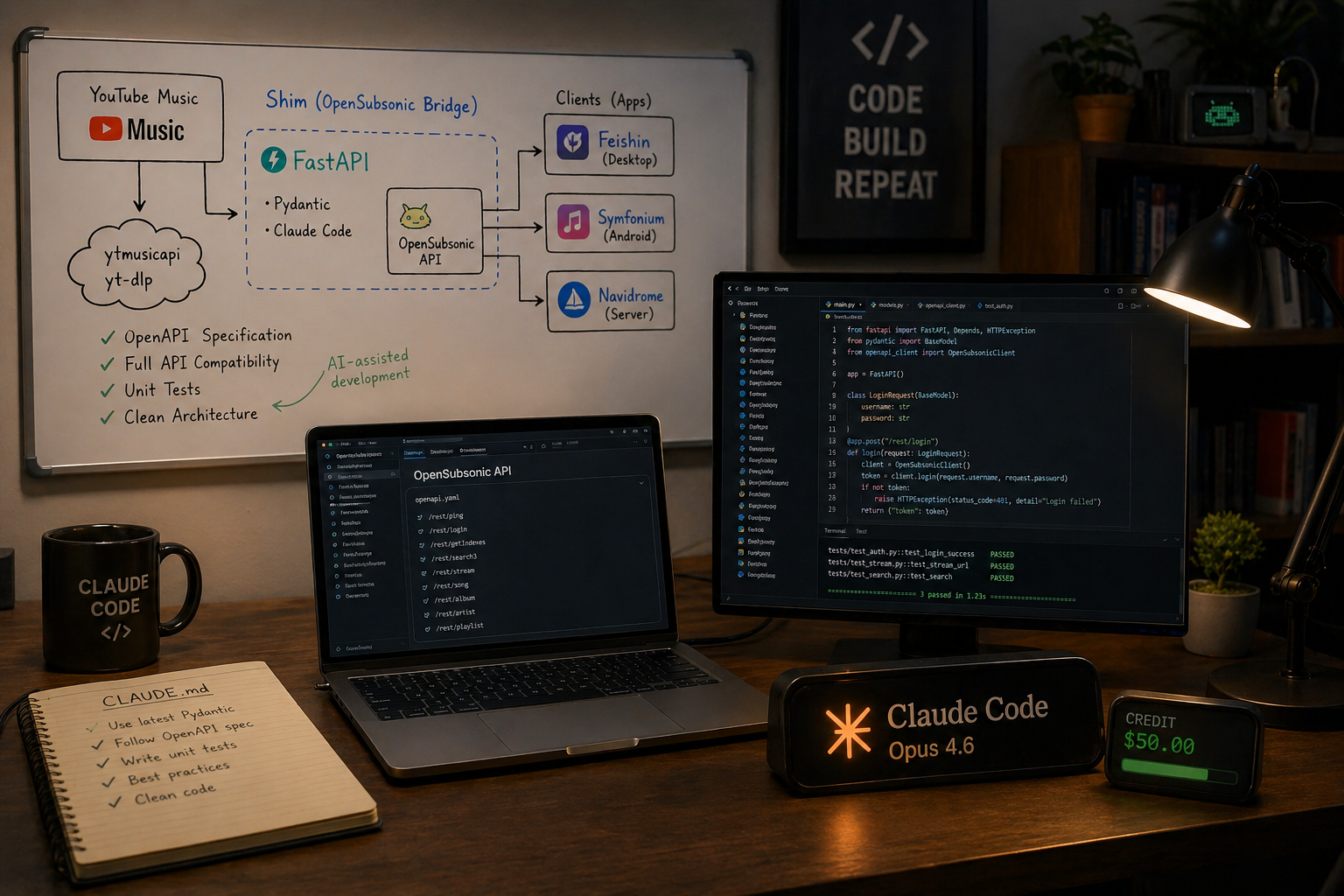

Some time ago, I started a project to build a shim layer connecting YouTube Music to the OpenSubsonic API. In short, OpenSubsonic is a fantastic API specification that decouples music streaming servers from the apps that play their music, so you can pick whatever combination you like. Personally, I prefer Navidrome as the server, the Feishin app on desktop, and Symfonium on Android. The idea was to make YouTube Music speak this API so I could use it from any of my favorite apps. Getting basic streaming working was easy with ytmusicapi and yt-dlp, but implementing all the required functionality was tedious, and, as usual, new projects stole my attention.
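
To make the idea of a shim concrete, here is a minimal sketch of the translation at its heart. This is my own illustration, not the code Claude wrote. It assumes ytmusicapi song search results carry `videoId`, `title`, `artists`, `album` and `duration_seconds` keys, and maps them onto a Subsonic-style song object; `to_subsonic_song` is a hypothetical name.

```python
from typing import Any


def to_subsonic_song(result: dict[str, Any]) -> dict[str, Any]:
    """Translate one ytmusicapi song search result into a Subsonic-style song.

    Assumption: the input uses ytmusicapi's song result keys (videoId, title,
    artists, album, duration_seconds); missing keys fall back to safe defaults.
    """
    artists = result.get("artists") or []
    album = result.get("album") or {}  # singles may come back without an album
    return {
        # Reuse the YouTube video ID as the song ID so a later stream
        # request can be resolved back to the same video.
        "id": result["videoId"],
        "title": result.get("title", ""),
        "artist": ", ".join(a["name"] for a in artists if a.get("name")),
        "album": album.get("name", ""),
        "duration": int(result.get("duration_seconds") or 0),
        "isDir": False,
    }
```

Keeping the YouTube ID as the Subsonic ID avoids a lookup table: whatever ID the client sends back in a stream request is already the video to fetch with yt-dlp.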

Fortunately, there was nothing novel or especially complex about this streaming project, and there was a clear specification to follow, which made it a perfect test case for AI-assisted programming. I decided to try Claude Code (with the Opus 4.6 model), taking advantage of $50 in free credit, to build the project from scratch. I set up the environment with FastAPI and Pydantic, gave Claude the OpenSubsonic OpenAPI specification file, and added a CLAUDE.md file with strict guidelines, such as following current Pydantic best practices and writing professional-quality unit tests, to keep it from repeating common mistakes.

Building the MVP

With everything set up, I let Claude take the lead. The workflow boiled down to entering planning mode, asking for the next piece of work, reviewing and correcting the plan, and then pointing it to the resources it needed whenever it hit an obstacle. My first request was straightforward: “Take a look at the openapi.json file. Implement a server using FastAPI that creates stubs for all the required functions, focusing only on modern JSON paths.”
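
As a rough, framework-free sketch of what “stubs for all the required functions” can look like: walk the `paths` section of an OpenAPI document and register one placeholder per GET/POST operation that answers with a Subsonic-style error envelope. The `1.16.1` version string, the helper names and the example paths used with it are illustrative assumptions, and wiring the stubs into FastAPI routes is left out.

```python
from typing import Any, Callable

API_VERSION = "1.16.1"  # assumption: Subsonic API version the server advertises

Handler = Callable[..., dict[str, Any]]


def not_implemented(endpoint: str) -> Handler:
    """Build a placeholder handler that replies with a Subsonic-style error."""

    def handler(**_params: Any) -> dict[str, Any]:
        return {
            "subsonic-response": {
                "status": "failed",
                "version": API_VERSION,
                # Code 0 is the generic error in the Subsonic error table.
                "error": {"code": 0, "message": f"{endpoint} is not implemented yet"},
            }
        }

    return handler


def build_stubs(spec: dict[str, Any]) -> dict[str, Handler]:
    """Create one stub per GET/POST operation listed under the spec's "paths"."""
    stubs: dict[str, Handler] = {}
    for path, operations in spec.get("paths", {}).items():
        for method in ("get", "post"):
            if method in operations:
                stubs[f"{method.upper()} {path}"] = not_implemented(path)
    return stubs
```

Generating the stubs from the spec rather than by hand means no route is silently forgotten; each one can then be replaced by a real handler as the work progresses.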

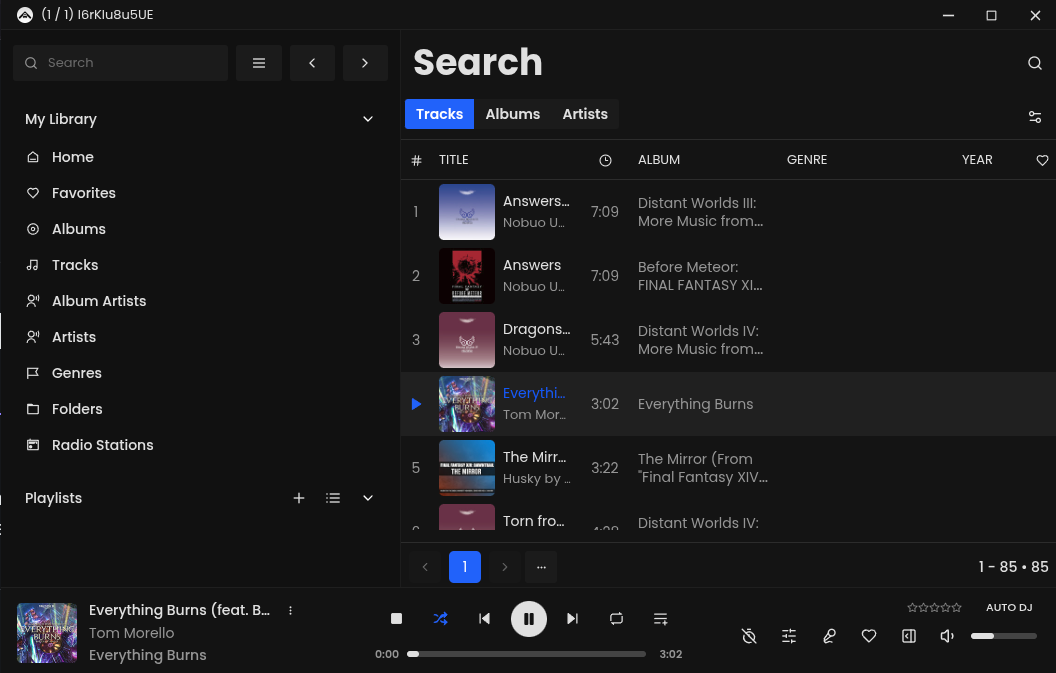

Even with a clear specification, Claude made some mistakes at first, but it fixed them on the second attempt. The next big step was asking it to let a client app connect, search for a song, and stream it. I quickly got a plausible implementation, but it stumbled when it came to actually connecting with the Feishin app. This is where iteration came in: I ran the app and passed the error logs back to Claude Code. Amazingly, after a few rounds, I was actually listening to music streamed through Feishin! The main obstacle turned out to be that the server had to return empty but correctly structured responses rather than nothing at all.
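
Here is a sketch of what “empty but correctly structured” means in practice. Every answer sits inside a `subsonic-response` envelope with a status and an API version, and an empty result still carries its wrapper object and list. The envelope fields follow my reading of the Subsonic and OpenSubsonic documentation, but the payload shapes for `getPlaylists`, `getAlbumList2` and `getStarred2`, the version strings and the server name are assumptions to verify against the spec.

```python
import copy
from typing import Any, Optional

# Assumptions: the Subsonic API version and the OpenSubsonic extension fields
# (type, serverVersion, openSubsonic) advertised in every reply.
ENVELOPE_BASE: dict[str, Any] = {
    "status": "ok",
    "version": "1.16.1",
    "type": "sub-standard",    # hypothetical server name
    "serverVersion": "0.1.0",  # hypothetical server version
    "openSubsonic": True,
}

# Assumed "nothing found" payloads: the wrapper object and its list are present
# but empty, instead of being left out of the response entirely.
EMPTY_PAYLOADS: dict[str, dict[str, Any]] = {
    "getPlaylists": {"playlists": {"playlist": []}},
    "getAlbumList2": {"albumList2": {"album": []}},
    "getStarred2": {"starred2": {"artist": [], "album": [], "song": []}},
}


def ok_response(payload: Optional[dict[str, Any]] = None) -> dict[str, Any]:
    """Wrap a payload in the subsonic-response envelope with status "ok"."""
    body = dict(ENVELOPE_BASE)
    # Deep-copy so callers cannot mutate the shared EMPTY_PAYLOADS templates.
    body.update(copy.deepcopy(payload or {}))
    return {"subsonic-response": body}


def empty_response(endpoint: str) -> dict[str, Any]:
    """Return a well-formed but empty reply for an endpoint with no data yet."""
    return ok_response(EMPTY_PAYLOADS.get(endpoint))
```

The point of the design is that a client iterating over `playlists.playlist` finds an empty list to loop over instead of a missing key it may not handle.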

Final Touches and Tedious Tasks

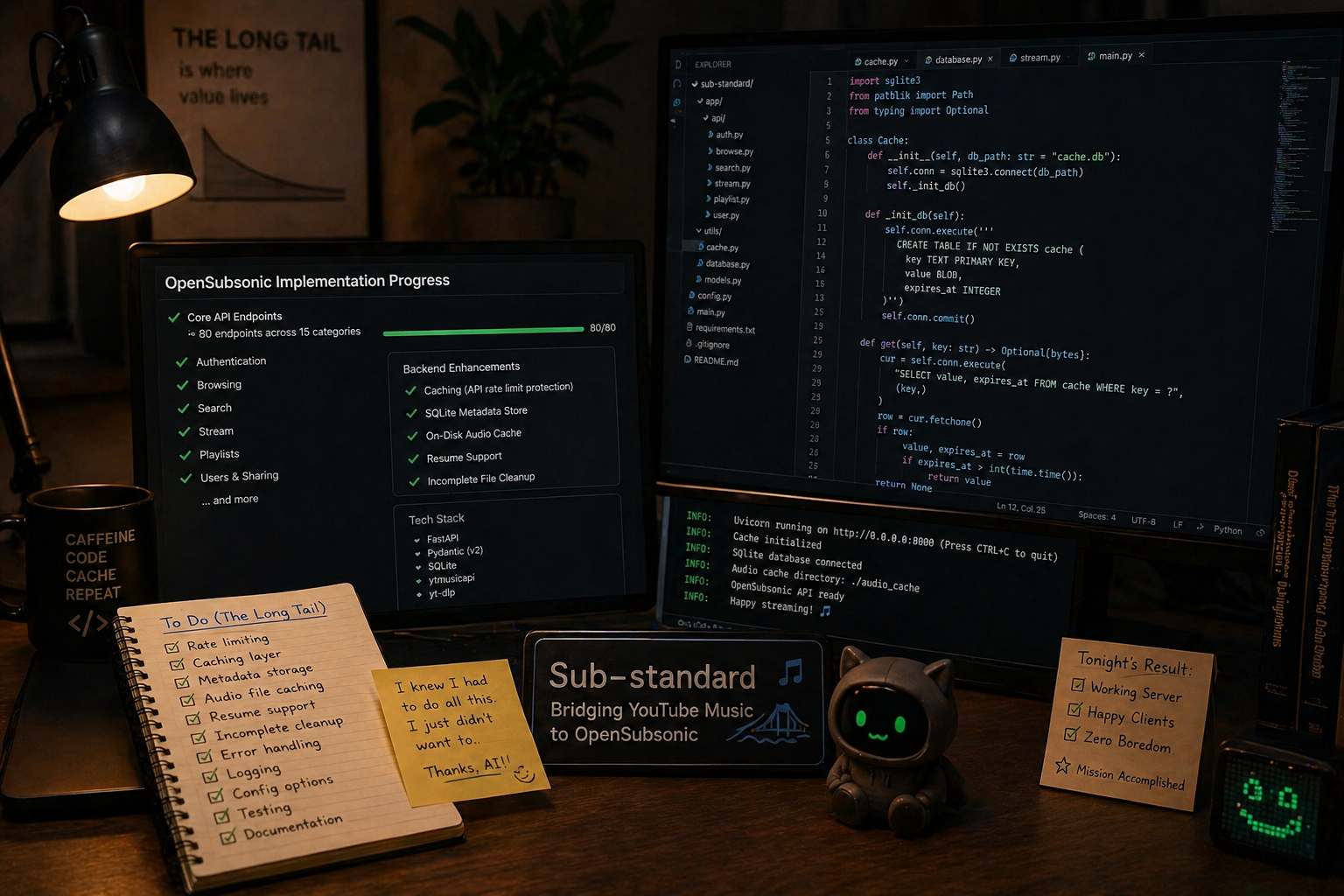

Getting to an MVP was the easy part; the real challenge was the “long tail” of tedious tasks needed to make the project actually usable. The OpenSubsonic API has about 80 routes divided into 15 categories. To make my server fully functional, I asked the AI to implement a simple caching mechanism to avoid exceeding YouTube API usage limits, and to store music metadata in SQLite databases.
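
One way to combine these two ideas, sketched under my own assumptions rather than taken from the project: keep upstream metadata in a SQLite table together with a fetch timestamp, and only call the upstream source again once an entry is older than a time-to-live. `MetadataCache`, the `metadata` table and the `fetch` callback are hypothetical names; in a real server, `fetch` would wrap a ytmusicapi call.

```python
import json
import sqlite3
import time
from typing import Any, Callable

SCHEMA = """
CREATE TABLE IF NOT EXISTS metadata (
    key        TEXT PRIMARY KEY,  -- e.g. a YouTube video or album ID
    payload    TEXT NOT NULL,     -- JSON-encoded metadata
    fetched_at REAL NOT NULL      -- Unix time of the upstream fetch
)
"""


class MetadataCache:
    """Persist upstream metadata in SQLite and reuse it while it is younger than `ttl` seconds."""

    # Assumption: one day is an acceptable staleness window for metadata.
    def __init__(self, path: str = ":memory:", ttl: float = 24 * 3600) -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(SCHEMA)
        self.ttl = ttl

    def get(self, key: str, fetch: Callable[[str], dict[str, Any]]) -> dict[str, Any]:
        """Return metadata for `key`, calling `fetch` only on a miss or a stale entry."""
        row = self.conn.execute(
            "SELECT payload, fetched_at FROM metadata WHERE key = ?", (key,)
        ).fetchone()
        if row is not None and time.time() - row[1] < self.ttl:
            return json.loads(row[0])  # fresh hit: no upstream request
        data = fetch(key)  # miss or stale entry: exactly one upstream request
        with self.conn:  # the connection context manager commits the write
            self.conn.execute(
                "INSERT OR REPLACE INTO metadata (key, payload, fetched_at) VALUES (?, ?, ?)",
                (key, json.dumps(data), time.time()),
            )
        return data
```

Passing a file path instead of `":memory:"` makes the cache survive restarts, which is what keeps repeated browsing from hammering the upstream service.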

The work also included saving songs to disk while streaming to avoid repeated downloads, with dedicated code to clean up incomplete files if the connection dropped before the download finished. These were all things I knew had to be done to make my old project useful, but I never did them because they were such a chore. In one short evening, thanks to AI, I had a service that works perfectly, which I jokingly named “Sub-standard.”
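
The save-while-streaming behavior can be sketched as a generator that sits between the upstream download and the HTTP response. Each chunk is written to a temporary `.part` file and passed on to the client, the file is renamed into place only after the last chunk, and the `finally` clause removes it otherwise. The function name and the `.part` convention are my assumptions; with FastAPI, a generator like this could be handed to a `StreamingResponse`.

```python
import os
from pathlib import Path
from typing import Iterable, Iterator


def tee_to_disk(chunks: Iterable[bytes], final_path: Path) -> Iterator[bytes]:
    """Yield audio chunks to the client while also writing them to disk.

    Data goes to a hypothetical "<name>.part" file that is renamed to
    final_path only once the whole stream has been written. If the generator
    is closed early (client disconnect) or the upstream iterator raises, the
    partial file is deleted.
    """
    part_path = final_path.with_name(final_path.name + ".part")
    completed = False
    try:
        with open(part_path, "wb") as f:
            for chunk in chunks:
                f.write(chunk)
                yield chunk
        os.replace(part_path, final_path)  # atomic rename on the same filesystem
        completed = True
    finally:
        if not completed:
            part_path.unlink(missing_ok=True)  # drop the incomplete download
```

Writing to a separate `.part` file means a half-downloaded song can never be mistaken for a cached one: only files that reached `os.replace` exist under their final name.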

Is this approach healthy for programmers?

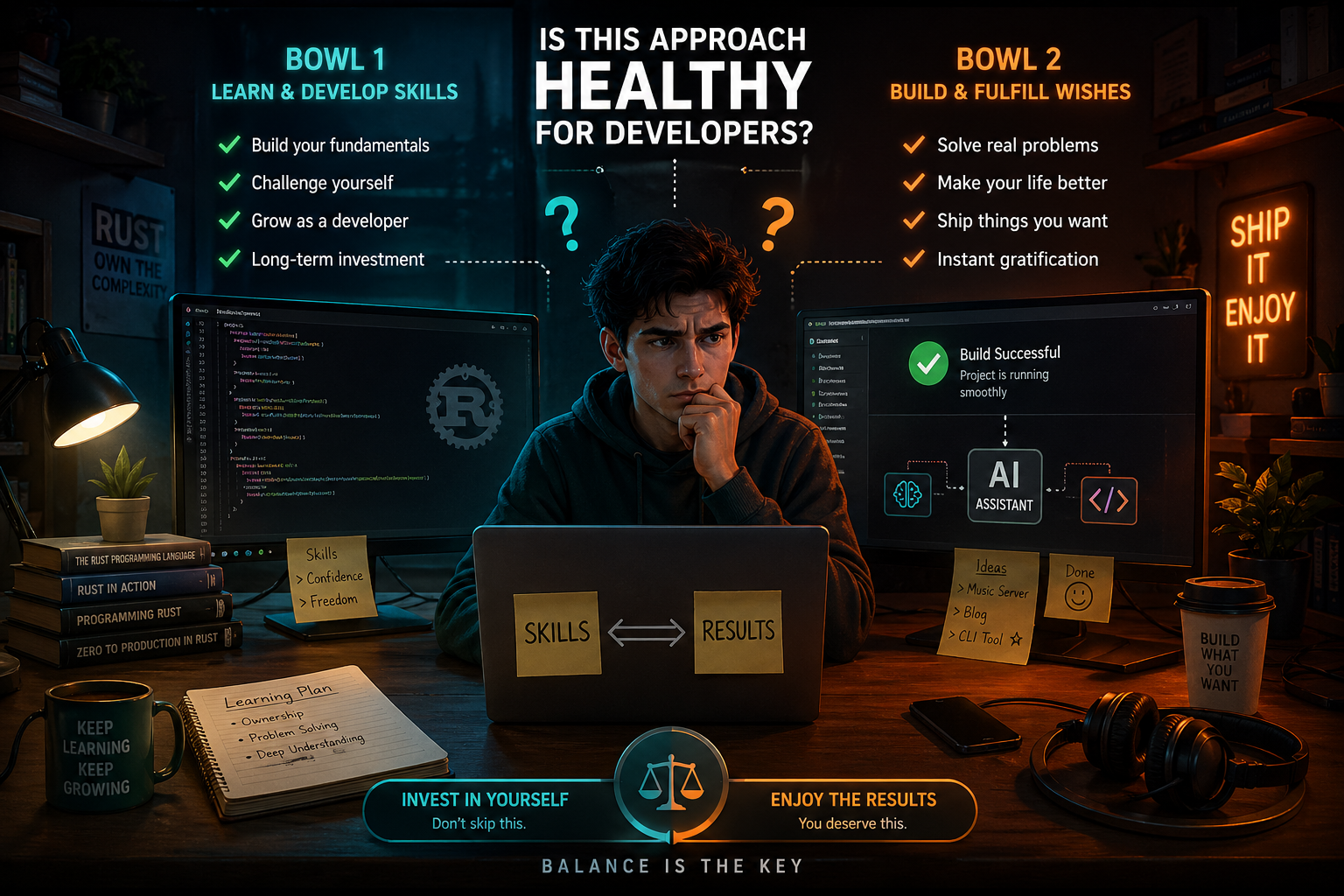

I don’t want to sound like a blindly enthusiastic promoter of AI programming. I still worry about losing programming skills through over-reliance on these tools, which is why I still insist on learning a demanding language like Rust through my own effort. I think we should sort our personal projects into two buckets: “things I do to learn and develop my skills” and “things I really wish existed and were available to me.”

This project clearly falls into the second bucket, and using AI to build projects like this is simply a way of getting something you want. I would never have finished it on my own, but now I have a working project: a metaphorical book that has actually been read instead of gathering dust on the shelf. In the end, what matters is not only finishing the projects you wish for with AI’s help, but also making sure you keep investing time in the first bucket to develop your own skills.
