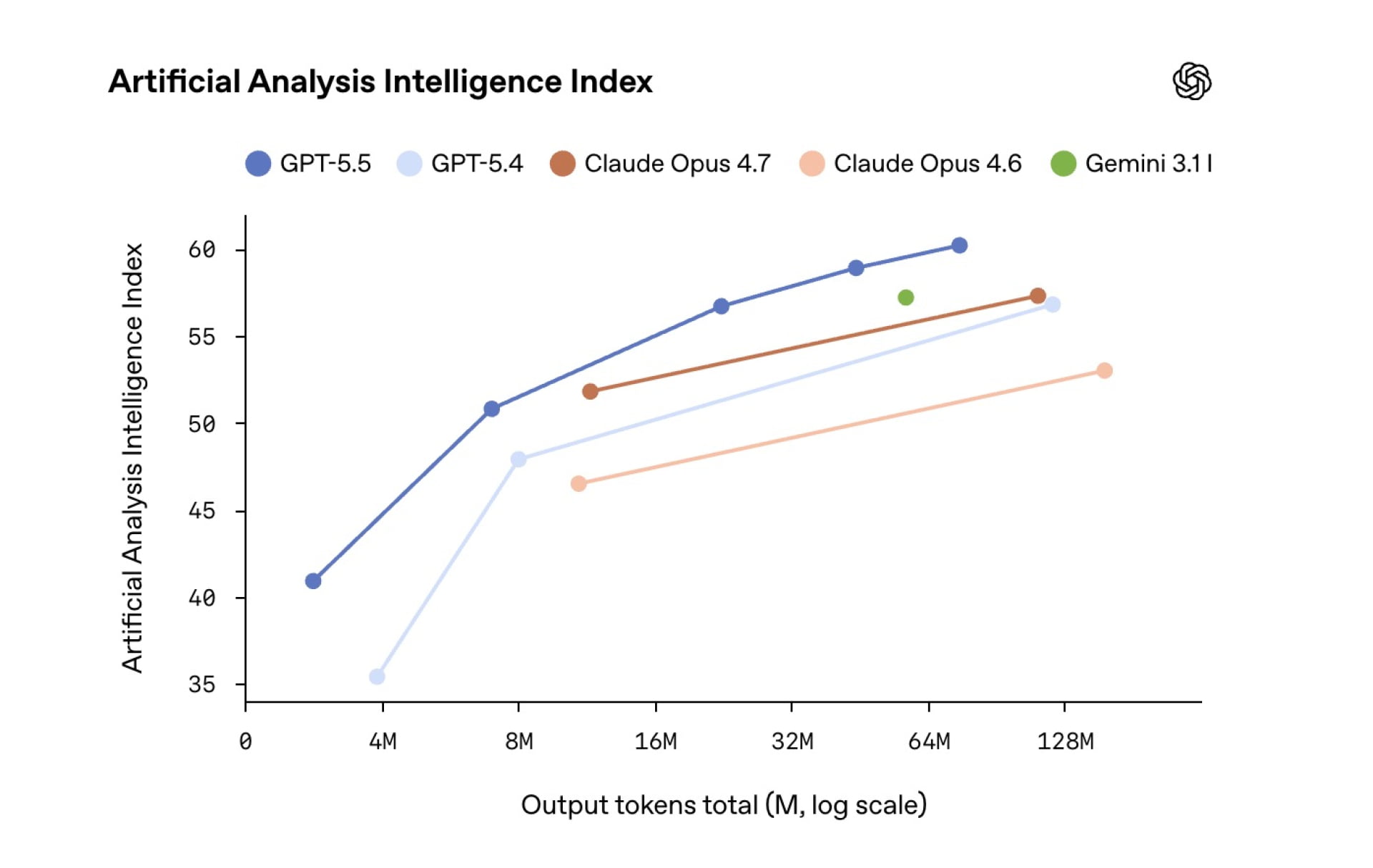

OpenAI has unveiled GPT-5.5, a new model focused primarily on programming, autonomous computer use, complex cognitive tasks, and scientific research support. The company says the new model can handle messy, multi-part tasks with greater autonomy while matching the per-token latency of GPT-5.4. It also uses fewer tokens on Codex tasks, which makes it more efficient as well as more capable.

Programming Capabilities That Shape the Future

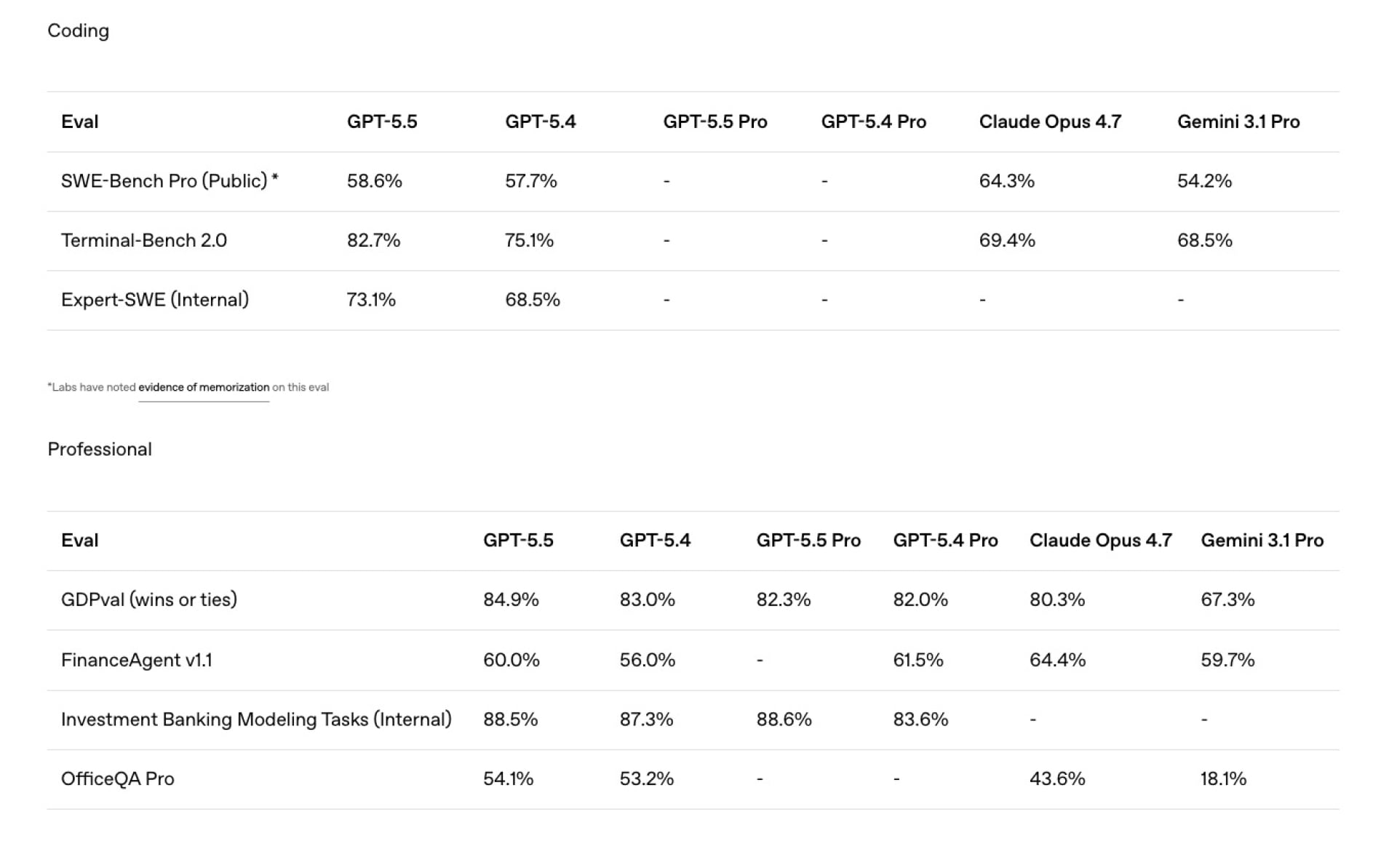

OpenAI describes GPT-5.5 as the most powerful agentic coding model it has developed to date. On Terminal-Bench 2.0, which measures complex command-line workflows, the model scored 82.7% compared to 75.1% for the previous generation, a gain of 7.6 percentage points. On SWE-Bench Pro, which evaluates an AI's ability to resolve real-world issues from GitHub, the model achieved 58.6%, a result OpenAI presents as evidence that it can solve tasks from start to finish in a single pass better than before.

Early tests also showed strong performance on long-running programming tasks, including handling ambiguous failures and applying sweeping changes across massive codebases without losing focus or context.

Advanced Computer Use and Scientific Research Support

This update pushes AI deeper into everyday office work. OpenAI says GPT-5.5 outperforms its predecessor at creating documents, spreadsheets, and presentations. Its computer-use capabilities now allow it to move between different tools, verify its own results, and interact with user interfaces seamlessly. As an example, OpenAI's internal finance team used the model to review 24,771 tax forms totaling 71,637 pages, which sped up the process by two full weeks compared to last year.

On the research front, the model showed significant improvement on GeneBench and scored 80.5% on BixBench, the best result among currently published models. Even more surprisingly, a custom internal version of GPT-5.5 helped discover a new mathematical proof related to off-diagonal Ramsey numbers, a result that was later formally verified in Lean.

Infrastructure and System Protection

OpenAI attributes this leap in performance to extensive infrastructure work in collaboration with NVIDIA. GPT-5.5 was designed for, trained on, and runs on NVIDIA GB200 and GB300 NVL72 systems. The model itself even helped analyze production data to devise new load-balancing algorithms that increased token generation speed by over 20%. The company did not overlook safety, either: the model's capabilities in the biological, chemical, and cybersecurity domains were classified as high-risk, and strict restrictions were imposed to prevent misuse.

Naturally, all this progress makes us wonder as Apple fans: when will we see deeper integration of AI into the Apple ecosystem? We know that Apple is collaborating with Google to bring Gemini in to power Siri's capabilities, but the pace of OpenAI's model development puts immense pressure on every competitor in Silicon Valley.

GPT-5.5 Rollout Begins for ChatGPT and Codex Subscribers Across Multiple Plans

GPT-5.5 is available today to subscribers on the Plus, Pro, Business, and Enterprise plans in both ChatGPT and Codex. GPT-5.5 Pro has also launched for Pro and Business subscribers, and both versions are coming soon to the API. In Codex, the model is also available to Edu and Go plan subscribers, with a context window of up to 400,000 tokens and a "Fast Mode" that generates text one and a half times faster, albeit at a higher cost.

