The “do whatever you want” era of artificial intelligence appears to be ending, or at the very least coming under close and constant scrutiny. In a move that reflects how sensitive the sector has become, Google, Microsoft, and xAI (owned by Elon Musk) have agreed to grant the US federal government early and exclusive access to the new AI models they are developing, before those models reach ordinary users.

Early Cooperation Agreement: National Security First

This agreement did not come out of thin air; it is the result of increasing pressure from the US Department of Commerce. The department’s Artificial Intelligence Safety Institute (AISI) will evaluate the new models developed by these tech giants. The stated goal is to carefully study these technologies to understand their capabilities and potential risks to national security before they are released to the public, reflecting growing concern that AI could become a double-edged sword.

Chris Fall, director of the AISI, stated that rigorous and independent scientific measurement is essential to understanding the limits of AI and its security implications. Notably, the agreement stipulates that the models be handed over with their standard “safeguards” reduced or even disabled, so that specialists can examine them in depth and determine whether they are capable of actions that could threaten national security if they fell into the wrong hands.

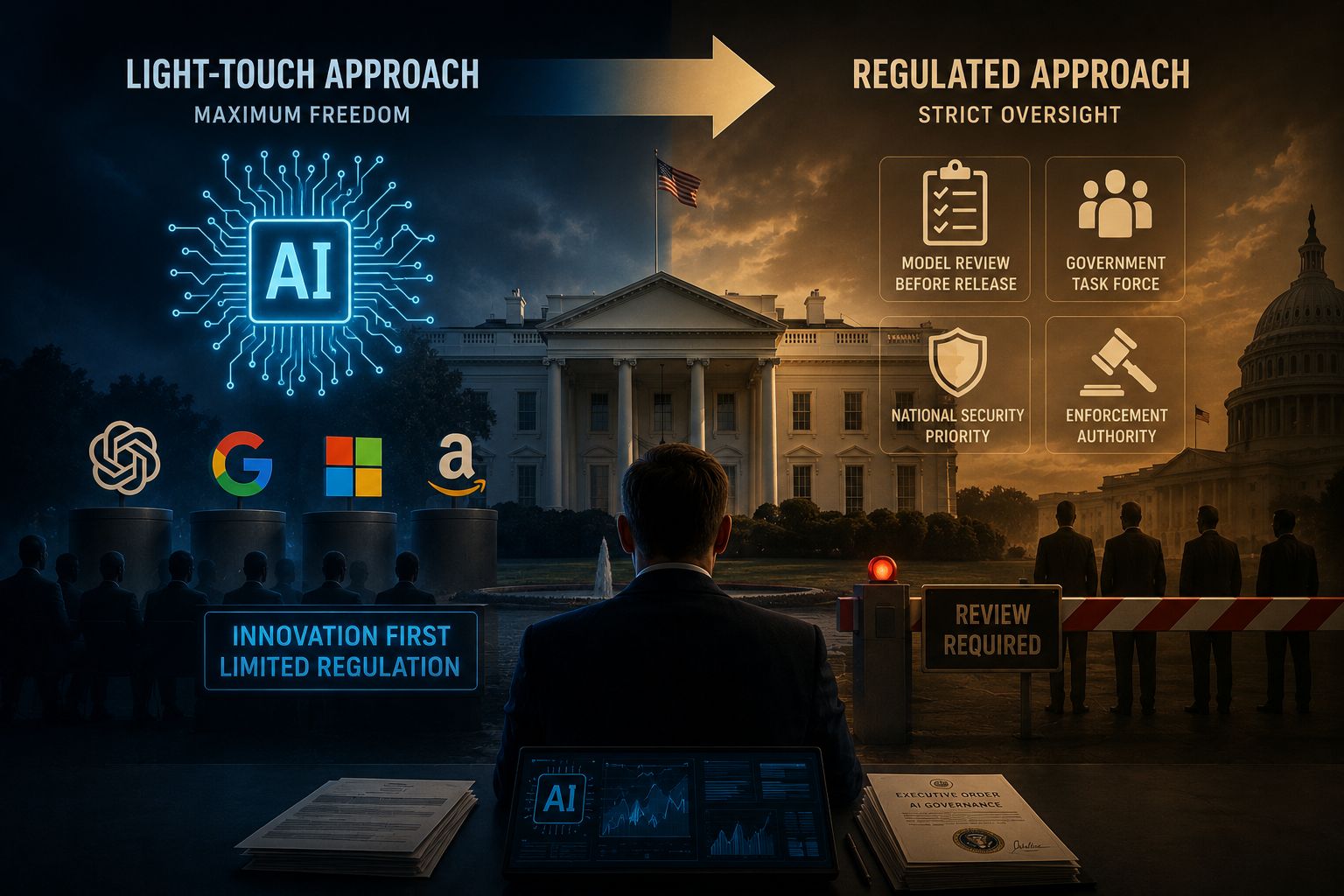

A Shift in Regulatory Policy: From Absolute Freedom to Strict Oversight

This shift follows reports that the Trump administration is considering tightening oversight of the AI industry, despite previous promises of a more hands-off approach. The White House now appears to be leaning toward creating a working group to oversee the development of future models, with the authority to review them before official release. This would be a radical change in how the government deals with tech companies, as the President seeks to ensure these technologies align with the administration's national vision.

This tension is not new. In an earlier conflict between the Pentagon and Anthropic, the Department of Defense sought to classify the company as a supply chain risk after Anthropic insisted on restrictions preventing the use of its technology in mass surveillance or autonomous weapons. Other companies, such as Google and Microsoft, seem to have preferred “voluntary cooperation” and signed agreements over a direct clash with the administration that could end in even stricter restrictions.
